Science quantifies the uncertainty in its data and conclusions.

If you come across data that claims to be completely accurate, should you trust it?

| This is one of ScienceOrNot’s Hallmarks of science. See them all here. |

In short…

Nothing is certain in science. Every scientific measurement must include an indication of its margin of error.

If you thought that science was certain – well, that is just an error on your part.

Richard Feynman, American physicist, 1964

What is meant by uncertainty?

No measurement is completely accurate or precise. There is always some uncertainty about how close it is to the real value. Consequently, all conclusions in science have some uncertainty because they are based on real-world measurements.

Sometimes uncertainty is called ‘error’.

Where does uncertainty come from?

Uncertainties originate in measurements. Measurement errors can come from inaccurate instruments (systematic error), or from quirks in the measurer's technique and natural variability (random uncertainty). In statistical tests, uncertainty also comes from the fact that only a sample of the whole population can be measured (sampling error).
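To make the difference between systematic and random error concrete, here is a minimal simulation sketch. The true value, the bias and the noise level are made-up numbers chosen for illustration, and the random error is assumed to be normally distributed. Averaging many readings shrinks the random part, but a systematic offset survives any amount of averaging.

```python
import random
import statistics

TRUE_VALUE = 7.5   # hypothetical true mass in kg
BIAS = 0.3         # hypothetical systematic offset, e.g. a miscalibrated scale
NOISE_SD = 0.5     # hypothetical spread of the random error (standard deviation)

def simulate_readings(n, bias, noise_sd, seed=1):
    """Return n simulated readings: true value + constant bias + random noise."""
    rng = random.Random(seed)
    return [TRUE_VALUE + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]

# The same seed is used for both runs, so the two sets of readings
# differ only by the constant bias.
random_only = simulate_readings(10_000, bias=0.0, noise_sd=NOISE_SD)
with_bias = simulate_readings(10_000, bias=BIAS, noise_sd=NOISE_SD)

# Averaging cancels most of the random error...
print(f"random error only:    mean = {statistics.mean(random_only):.3f} kg")
# ...but the systematic offset is still there after averaging.
print(f"with systematic bias: mean = {statistics.mean(with_bias):.3f} kg")
```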

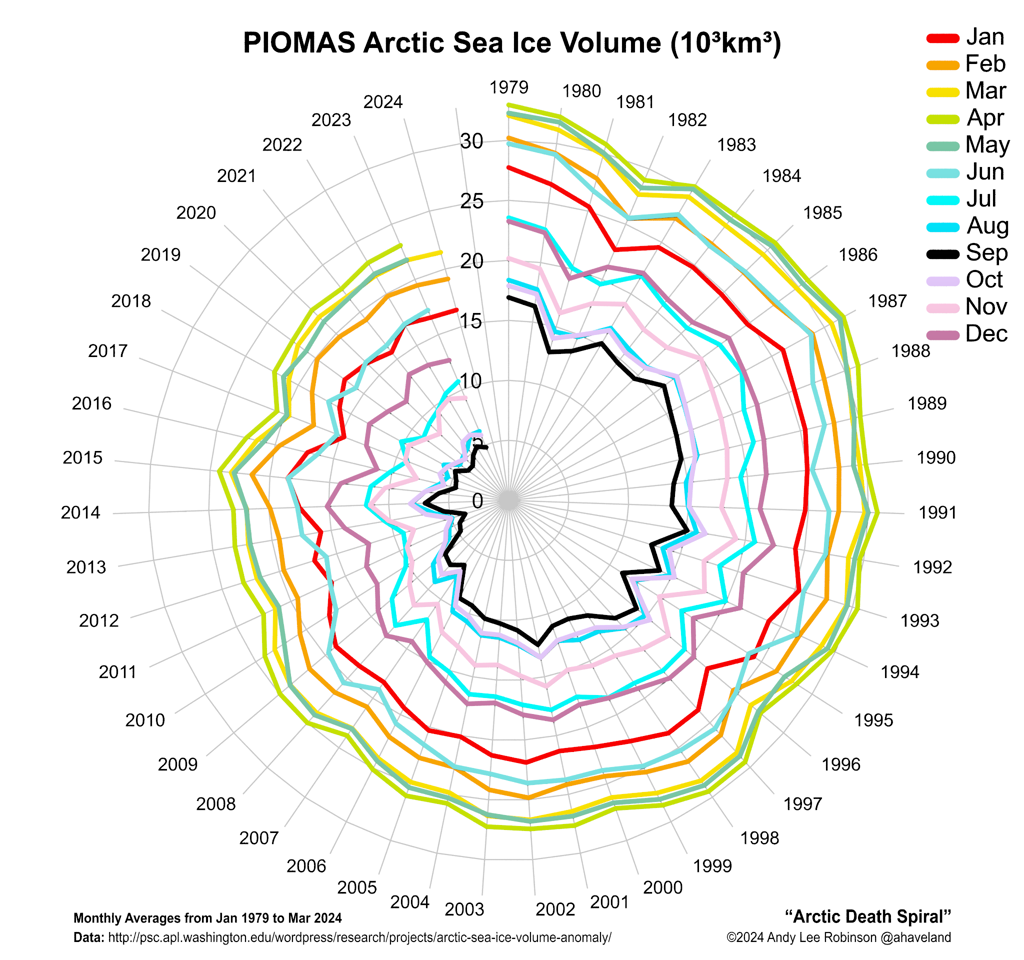

Uncertainties flow through the analysis of data, so all conclusions from scientific investigations carry uncertainties too.

How uncertainty is quantified.

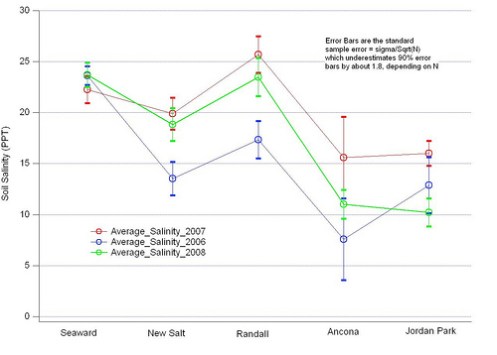

No scientific measurement is complete unless it is accompanied by a figure specifying the uncertainty. The uncertainty can be shown as an absolute value (7.5 ± 0.3 kg) or a percentage (7.5 kg ± 4%). In graphs of data, uncertainties are often shown by error bars.
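As a quick check of the arithmetic in the example above, converting between the absolute and percentage forms is just a ratio. The function names below are hypothetical helpers for illustration only.

```python
def absolute_to_percent(value, abs_uncertainty):
    """Express an absolute uncertainty as a percentage of the measured value."""
    return abs(abs_uncertainty / value) * 100

def percent_to_absolute(value, pct_uncertainty):
    """Express a percentage uncertainty as an absolute uncertainty in the value's units."""
    return abs(value) * pct_uncertainty / 100

# 7.5 ± 0.3 kg is the same as 7.5 kg ± 4%
print(f"{absolute_to_percent(7.5, 0.3):.1f}%")    # 4.0%
print(f"± {percent_to_absolute(7.5, 4):.1f} kg")  # ± 0.3 kg
```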

In statistics, the uncertainty in an estimate is usually expressed as a confidence interval: the range of values that are plausible at a particular confidence level. The conventional confidence level is 95%, meaning that if the measurements were repeated many times, about 95% of the intervals calculated this way would contain the true value. The term ‘statistically significant’ typically refers to this 95% level, which for a normally distributed estimate corresponds to about 2 (more precisely 1.96) standard errors either side of the mean.
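A minimal sketch of a 95% confidence interval for a mean, assuming the readings are independent and that the normal approximation (multiplier of about 1.96) is good enough. For small samples a Student's t multiplier would be more appropriate. The readings are invented for illustration.

```python
import math
import statistics

def confidence_interval_95(samples):
    """Approximate 95% confidence interval for the mean (normal approximation)."""
    n = len(samples)
    mean = statistics.mean(samples)
    # Standard error of the mean: sample standard deviation / sqrt(n)
    sem = statistics.stdev(samples) / math.sqrt(n)
    # Two-sided 95% interval: 2.5% in each tail, so use the 97.5th percentile (about 1.96)
    z = statistics.NormalDist().inv_cdf(0.975)
    return mean - z * sem, mean + z * sem

# Hypothetical repeated readings of a mass in kg
readings = [7.4, 7.6, 7.5, 7.3, 7.7, 7.5, 7.6, 7.4, 7.5, 7.5]
low, high = confidence_interval_95(readings)
print(f"95% CI for the mean: {low:.2f} to {high:.2f} kg")
```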

Uncertainties are carried through calculations and other analyses, so every derived quantity must include an uncertainty figure.
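One standard way to carry uncertainties through a calculation, assuming the input uncertainties are independent and random, is to combine them in quadrature: absolute uncertainties for sums and differences, relative uncertainties for products and quotients. This is a first-order approximation, and the helper names and numbers below are hypothetical.

```python
import math

def add_with_uncertainty(a, da, b, db):
    """a + b, with independent absolute uncertainties combined in quadrature."""
    return a + b, math.hypot(da, db)

def divide_with_uncertainty(a, da, b, db):
    """a / b, with independent relative uncertainties combined in quadrature."""
    q = a / b
    rel = math.hypot(da / a, db / b)
    return q, abs(q) * rel

# Hypothetical example: density = mass / volume
mass, d_mass = 7.5, 0.3        # kg
volume, d_volume = 2.50, 0.05  # litres
density, d_density = divide_with_uncertainty(mass, d_mass, volume, d_volume)
print(f"density = {density:.2f} ± {d_density:.2f} kg/L")
```

The 4% uncertainty in the mass and the 2% uncertainty in the volume combine to about 4.5%, less than their simple sum, because independent random errors partly cancel.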

Why uncertainty needs to be quantified.

Some people believe that because science emphasises accuracy and precision, its measurements and conclusions are exact. This can never be the case, and scientists must always ensure that they communicate how much confidence we can have in their conclusions.

Bogus science tends to rely on anecdotal evidence and to sidestep the idea of uncertainty. The more blatant scammers like to claim that whatever they are promoting has been “scientifically proven”, as if the possibility of uncertainty does not exist.

Examples

- In 1988, the Shroud of Turin was radiocarbon dated by three separate laboratories. At the 68% confidence level (1 standard deviation), the three measured radiocarbon ages for the shroud were 646 ± 31 years, 750 ± 30 years and 676 ± 24 years.

- In its Fourth Assessment Report, the Intergovernmental Panel on Climate Change used these terms to express the assessed likelihood of results: virtually certain >99%; extremely likely >95%; very likely >90%; likely >66%; more likely than not >50%; about as likely as not 33% to 66%; unlikely <33%; very unlikely <10%; extremely unlikely <5%; exceptionally unlikely <1%.

- Garmin estimates that its newer GPS receivers, which support the Wide Area Augmentation System (WAAS), give locations that are accurate to within 3 metres.
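The three Shroud of Turin ages above can be combined into a single estimate. A common way to do this, used here as an illustration rather than taken from the laboratories' own analysis, is an inverse-variance weighted mean, in which more precise measurements count for more. This sketch assumes the three uncertainties are independent 1 SD values.

```python
import math

def weighted_mean(values, sds):
    """Inverse-variance weighted mean and its 1 SD uncertainty."""
    weights = [1 / sd**2 for sd in sds]
    mean = sum(w * v for w, v in zip(weights, values)) / sum(weights)
    return mean, 1 / math.sqrt(sum(weights))

ages = [646, 750, 676]  # radiocarbon ages in years, one per laboratory
sds = [31, 30, 24]      # 1 SD uncertainties
mean, sd = weighted_mean(ages, sds)
print(f"combined age: {mean:.0f} ± {sd:.0f} years (1 SD)")
```

The combined uncertainty is smaller than any single laboratory's, which is only justified if the individual results agree within their uncertainties.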

Image attribution: Soil Salinity 2006 2007 2008 standard error bars by JCS Ecology OOB http://www.flickr.com/photos/30783966@N06/3300952489/

Richard Feynman’s quote is from The Character of Physical Law, The 1964 Messenger Lectures, BBC, 1965

The error bars cartoon is by ~Velica on deviantART

Reviewed: 2013/09/10