Science red flags

These red flags are indicators of either bad science or unscientific nonsense. The more of them you can identify in a claim, the less reliable that claim is likely to be. Compare them with the Hallmarks of science, which indicate science that’s conducted properly.

You think seeing is believing, that your thoughts are always based on reasonable intuitions and rational analysis, and that though you may falter and err from time to time, for the most part you stand as a focused, intelligent operator of the most complicated nervous system on earth. You believe that your abilities are sound, your memories perfect, your thoughts rational and wholly conscious … The truth is that your brain lies to you. Inside your skull is a vast and far-reaching personal conspiracy to keep you from uncovering the facts about who you actually are, how capable you tend to be, and how confident you deserve to feel. That undeserved confidence alters your behaviour and creates a giant, easily opened back door through which waltz con artists, magicians, public relations employees, advertising executives, pseudoscientists, peddlers of magical charms, and others.

David McRaney, You Are Now Less Dumb, 2013

Use these links to find out more about each of the science red flags. (This list will grow as new red flags are posted on the blog.)

|

The ‘scientifically proven’ subterfuge. | Scammers and deniers use two forms of this tactic:

|

|

Persecuted prophets and maligned mavericks: The Galileo Gambit. | Users of this tactic will try to persuade you that they belong to a tradition of maverick scientists who have been responsible for great advances despite being persecuted by mainstream science. |

|

Empty edicts – absence of empirical evidence | This tactic shows up when people make claims in the form of bald statements – “this is the way it is” or “this is true” or “I know/believe this” or “everybody knows this” – without any reference to supporting evidence. |

|

Anecdotes, testimonials and urban legends | Those who use this tactic try to present stories about specific cases or events as supporting evidence. The stories range from personal testimonials, to anecdotes about acquaintances, to tales about unidentifiable subjects. |

|

Charges of conspiracy, collusion and connivance | Conspiracy theorists usually start by targeting weaknesses in an accepted model, then propose a conspiracy that explains why their ‘better’ model has been suppressed. Although there can be overwhelming evidence favouring the accepted model, they claim that this simply means the conspiracy has been successful. |

|

Stressing status and appealing to authority | People who use this tactic try to convince you by quoting some ‘authority’ who agrees with their claims and pointing to that person’s status, position or qualifications, instead of producing real-world evidence. The tactic is known as the argument from authority. |

|

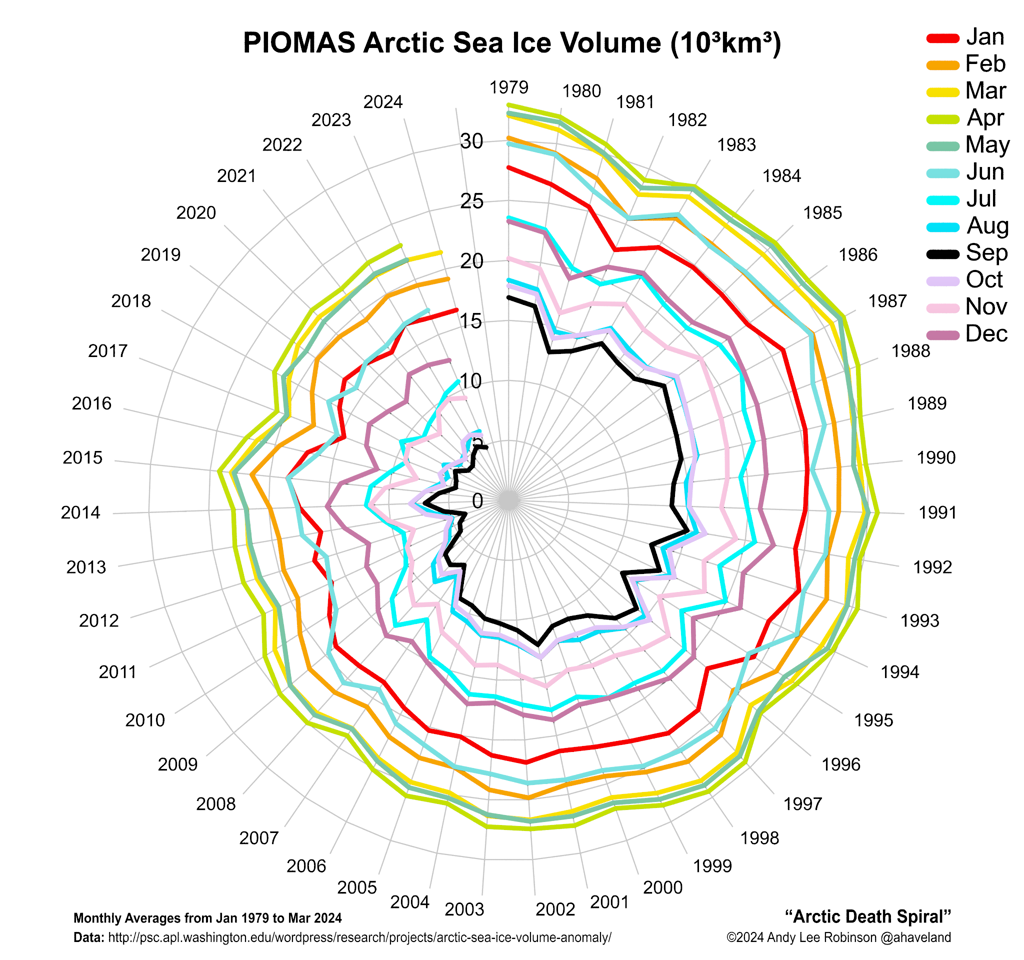

Devious deception in displaying data: Cherry picking | In cherry-picking, people use legitimate evidence, but not all of the evidence. They select segments of evidence that appear to support their argument and hide or ignore the rest of the evidence which tends to refute it. |

|

Repetition of discredited arguments – parroting PRATT | In this tactic, people persist in repeating claims that have been shown over and over to have no foundation. Look for slogans, sweeping statements or claims that look as though they could easily be refuted. |

|

Duplicity and distraction – false dichotomy | In this tactic, people assert that there are only two possible (and usually opposite) positions to choose from, when in fact there are more. They try to argue that if one position is shown to be false, then the other must be correct. |

|

Wishful thinking – favouring fantasy over fact | We all fall victim to this tactic because we use it on ourselves. We like to believe things that conform with our wishes or desires, even to the extent of ignoring evidence to the contrary. |

|

Appeals to ancient wisdom – trusting traditional trickery | People who use this tactic try to persuade you that a certain explanation, treatment or model must be correct because it’s been around for a long time. |

|

Technobabble and tenuous terminology: the use of pseudo scientific language | In this tactic, people use invented terms that sound “sciencey” or co-opt real science terms and apply them incorrectly. |

|

Confusing correlation with causation: rooster syndrome | This is the natural human tendency to assume that, if two events or phenomena consistently occur at about the same time, then one is the cause of the other. Hence “rooster syndrome”, from the rooster who believed that his crowing caused the sun to rise. |

|

Straw man: crushing concocted canards | When this tactic is used, it’s always in response to an argument put up by an opponent. Unable to come up with a reasoned response, the perpetrator constructs a distorted, incorrect version (the “straw man”) of the opponent’s argument, and then proceeds to tear it to shreds. |

|

Indelible initial impressions: the anchoring effect | Anchoring is the human tendency to rely almost entirely on one piece of evidence or study, usually one that we encountered early, when making a decision. |

|

Perceiving phoney patterns: apophenia | This happens when you convince yourself, or someone tries to convince you, that some data reveal a significant pattern when really the data are random or meaningless. |

|

Esoteric energy and fanciful forces. | This tactic is easy to pick because people who use it try to convince you that some kind of elusive energy or power or force is responsible for whatever effect they are promoting. |

|

Banishing boundaries and pushing panaceas – applying models where they don’t belong | Those who use this tactic take a model that works under certain conditions and try to apply it more widely to circumstances beyond its scope, where it does not work. Look for jargon, sweeping statements and vague, rambling “explanations” that try to sound scientific. |

|

Averting anxiety with cosmic connectivity: magical thinking | Magical thinking is present when anyone argues that everything is connected: thoughts, symbols and rituals can have distant physical and mental effects; inanimate objects can have intentions and mystical influences. Often, the connectivity is supposedly mediated by some mysterious energy, force or vibration and there is much talk of holism, resonance, balance, essences and higher states. |

|

Single study syndrome – clutching at convenient confirmation | This tactic shows up when a person who has a vested interest in a particular point of view pounces on some new finding which seems to either support or threaten that point of view. It’s usually used in a context where the weight of evidence is against the perpetrator’s view. |

|

Appeal to nature – the authenticity axiom | You are expected to accept without question that anything ‘natural’ is good, and anything ‘artificial’, ‘synthetic’ or ‘man-made’ is bad. |

|

The reversed responsibility response – switching the burden of proof | This tactic is usually used by someone who’s made a claim and then been asked for evidence to support it. Their response is to demand that you show that the claim is wrong and if you can’t, to insist that this means their claim is true. |

|

The scary science scenario – science portrayed as evil. | The perpetrators try to convince you that scientific knowledge has resulted in overwhelmingly more harm than good. They identify environmental disasters, accidents, human tragedies, hazards, weapons and uncomfortable ideas that have some link to scientific discoveries and claim that science must be blamed for the any damage they cause. They may even go so far as claiming that scientists themselves are generally cold, unfeeling people who enjoy causing harm. |

|

False balance – cultivating counterfeit controversy to create confusion | This tactic is promoted by peddlers of bad science and pseudoscience and is often taken up by journalists and politicians. In discussing an issue, they insist that “both sides” be presented. Many journalists routinely look for a representative of each “side” to include in their stories, even though it might be inappropriate. Groups or individuals who are pushing nonsense or marginal ideas like to exploit this tendency so that their point of view gains undeserved publicity. |

|

Confirmation bias – ferreting favourable findings while overlooking opposing observations | This is a cognitive bias that we all suffer from. We go out of our way to look for evidence that confirms our ideas and avoid evidence that would contradict them.. |

|

Crafty contrarians and wily watchdogs – donning the mantle of shrewdness | This is an attitude adopted by a person – and it’s usually an older male – who has achieved success within his profession. This person feels entitled to make pronouncements about areas in which he has no competence. He believes he has developed a knack for making good judgements based on ‘intuition’ or ‘gut feeling’ and you are expected to respect his opinions because of his reputation for astuteness. His opinions are usually at odds with the accepted science. |

|

The appeal to common sense – garbage in the guise of gumption | The perpetrator tries to persuade you to accept or reject a claim based on what’s supposedly “common sense”. Look out for key words such as “Obviously, …”, “Naturally, …”, “Everyone knows …” or “It goes without saying that …”. |

|

Ostensible oppression of opposing opinions – claims of rights violated. | In this tactic, people insist that their right to express their opinion, or their right to free speech, is being denied. This is their reaction to having their opinions dismissed, rejected or ignored by mainstream scientific forums. They refuse to accept that their opinions fail because they do not meet the standards for publication in those forums. |

|

The alarmism accusation – claims of crises created to funnel funding. | Those who use this tactic insist that the current scientific consensus on some issue is corrupt. This, they claim, is because a group of scientists has colluded to hype the position which favours its own interests. The purported motive is to attract funding for their research. Look for derisive terms such as “follow the money” or “pal review”. |